1. Introduction

Artificial intelligence has rapidly transitioned from a research discipline to a large-scale industrial infrastructure buildout. Over the past two years, the deployment of generative AI systems has triggered a surge in demand for compute, specialized semiconductors, hyperscale cloud capacity, and new data center architectures.

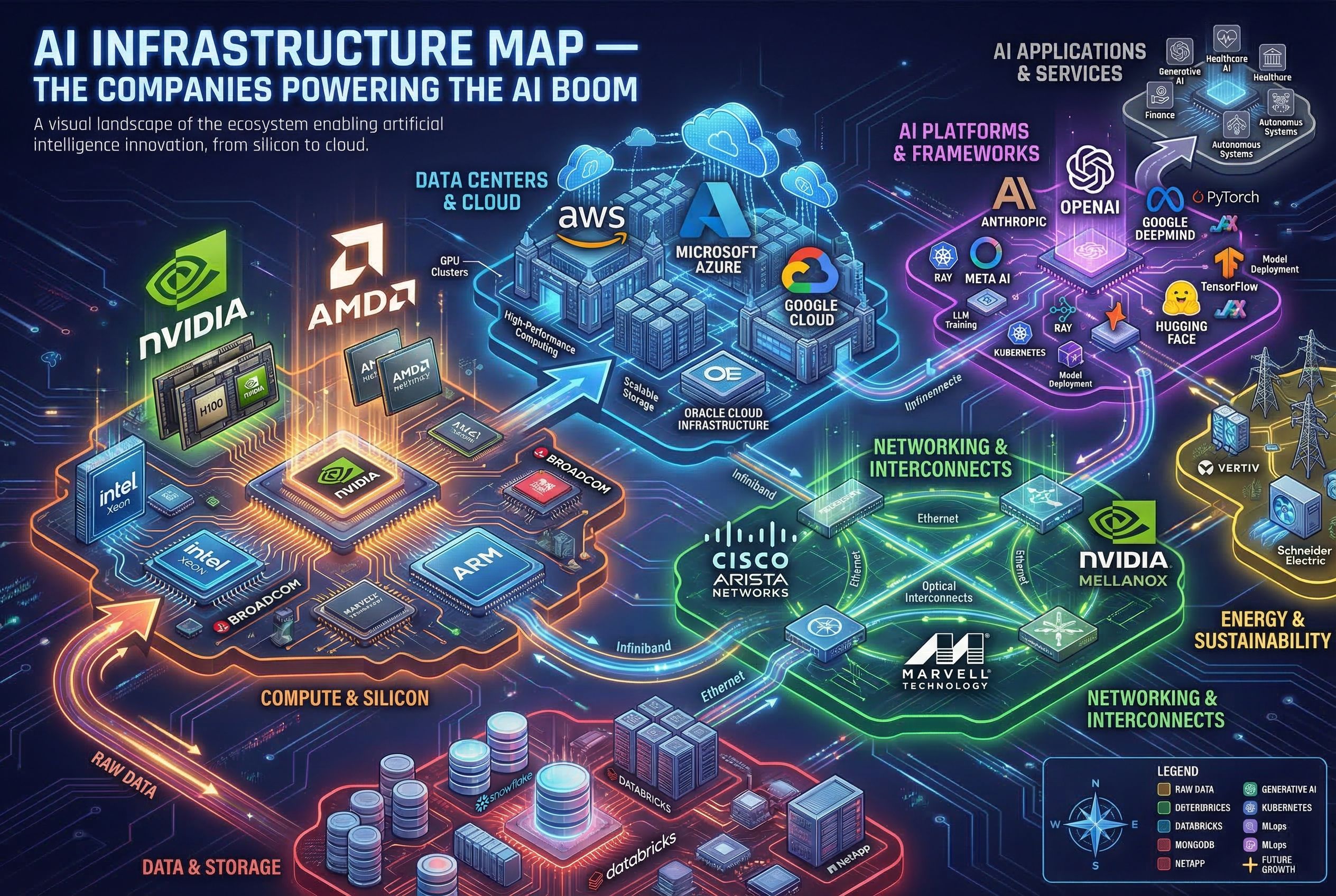

The result is the emergence of a layered AI infrastructure stack. At the bottom sit semiconductor manufacturers producing GPUs, high-bandwidth memory, and networking silicon. Above them are hyperscale cloud providers that aggregate compute into massive clusters. Surrounding these layers are networking vendors, server manufacturers, and data center operators enabling the physical expansion of AI compute.

This infrastructure is increasingly concentrated among a relatively small set of companies with the capital, engineering expertise, and supply chain access required to scale AI systems. The structure of this ecosystem offers early signals about how the economic value of the AI boom may be distributed across the technology sector.

Signal 1 — GPU Compute Becomes the Core of the AI Stack

The modern AI boom is fundamentally a compute cycle driven by accelerated processors. Graphics processing units (GPUs), originally designed for gaming and visualization, have become the dominant architecture for training and running large AI models because they execute thousands of operations in parallel, which suits the large matrix multiplications at the core of neural networks.

Companies such as Nvidia have emerged as central infrastructure providers, supplying the GPUs that power most large-scale AI training clusters. Competitors including AMD and Intel are attempting to capture share with alternative accelerator architectures, while startups and hyperscalers are investing in custom silicon, such as Google's TPUs and Amazon's Trainium chips.

Beyond the GPU itself, the ecosystem includes high-bandwidth memory suppliers, advanced packaging providers, and semiconductor foundries responsible for manufacturing the chips. As AI models grow larger, the importance of memory bandwidth, interconnect speeds, and chip packaging has increased significantly.

This has shifted the semiconductor industry toward an AI-centric supply chain, in which the supply of specific components, such as high-bandwidth memory (HBM) and advanced packaging capacity, has become a critical bottleneck.

Key Observation

AI infrastructure demand is increasingly constrained by specialized semiconductor components rather than general compute capacity.

Signal

Control over GPU architecture, advanced packaging, and memory supply chains may determine the long-term leadership of the AI infrastructure market.

Signal 2 — Hyperscalers Consolidate AI Compute Power

While semiconductor firms provide the building blocks of AI compute, hyperscale cloud platforms act as the primary aggregators of that capacity.

Companies such as Microsoft, Amazon, and Google have invested tens of billions of dollars in AI infrastructure, building massive GPU clusters within their global cloud networks. These platforms allow enterprises and developers to access AI compute without owning the underlying hardware.

The hyperscaler model creates a powerful feedback loop. As demand for AI services grows, cloud providers expand data center capacity and purchase more AI accelerators. In turn, access to large compute clusters enables the development of increasingly sophisticated models and applications.

This dynamic is reinforcing the strategic importance of cloud platforms in the AI economy. Hyperscalers are no longer just software and services companies. They are evolving into vertically integrated infrastructure providers that control compute, networking, storage, and, increasingly, the AI models themselves.

Smaller cloud providers and startups face structural challenges competing with these firms due to the scale of capital expenditure required to build AI clusters.

Key Observation

The AI boom is accelerating the concentration of compute infrastructure within a handful of hyperscale cloud platforms.

Signal

Hyperscalers may become the primary distribution layer for AI capabilities, shaping which models, tools, and applications gain global adoption.

Signal 3 — Data Centers and Networking Become Strategic Infrastructure

As AI workloads scale, traditional data center architecture is being redesigned around high-density compute clusters and ultra-fast networking.

AI training clusters require thousands of GPUs connected through high-speed interconnects capable of transferring enormous volumes of data with minimal latency. This has increased demand for specialized networking technologies from companies such as Broadcom, Arista Networks, and Mellanox (now part of Nvidia).

Simultaneously, the physical design of data centers is evolving. AI clusters consume significantly more power and generate more heat than traditional cloud workloads, requiring new cooling technologies and higher energy capacity.

Large technology firms and data center operators are therefore investing heavily in new facilities optimized specifically for AI workloads. These AI-focused facilities often integrate advanced networking fabrics, liquid cooling systems, and high-density server racks.

Energy infrastructure is also becoming an increasingly important component of the AI supply chain. AI data centers are rapidly becoming some of the most power-intensive computing environments ever built.

Key Observation

AI infrastructure expansion is transforming data centers from generic cloud facilities into specialized compute factories.

Signal

Energy availability, networking capacity, and physical infrastructure may become critical constraints on the long-term scaling of AI systems.

Closing Thoughts

The AI boom is often discussed in terms of applications and models, but the underlying infrastructure layer is where much of the economic and strategic power resides.

A relatively small group of companies now controls key components of the AI stack: semiconductor accelerators, hyperscale compute platforms, networking hardware, and large-scale data center infrastructure. Their ability to coordinate supply chains, deploy capital, and scale compute clusters is shaping the pace of AI development.

As the industry matures, competition is likely to intensify across multiple layers of this infrastructure—from chip design and manufacturing to cloud platforms and networking systems. At the same time, the enormous capital requirements of AI infrastructure may reinforce the dominance of firms already operating at global scale.

Understanding the structure of this infrastructure ecosystem is therefore essential for interpreting the broader trajectory of the AI economy.